It appears that Norway is following Sweden's lead [previous post] by implementing a national plan to reduce greenhouse gas emissions.

The Norwegian government appointed the Norwegian Commission on Low Emissions on March 11, 2005 and charged the Commission with the task of preparing scenarios of how Norway can reduce its emissions of greenhouse gases by 50-80 percent by 2050.

The Commission presented its final report to Minister of the Environment Helen Bjørnøy on October 4, 2006.

The Commission projected a reference scenario for how emissions growth would occur absent any reduction plan. Norway's greenhouse gas emissions in the reference path reach about 70 million tons of CO2 equivalent by 2050, according to the Commission. About three quarters of the emissions in this scenario are distributed fairly evenly between electricity production, industrial processes, and transportation. The remaining emissions will come mainly from gas and oil activity, heating, agriculture, and waste disposal, the Commission reports.

[Graphic: The Commission expects Norway's greenhouse gas emissions to reach about 70 million tons CO2 equivalent by 2050. Historical and projected annual emissions of greenhouse gases are shown in the Commission’s reference path. The low-emission goal for 2050 is also shown]

The Commission's report identifies 15 measures that together will ensure the necessary reduction of Norwegian emissions over the next half-century. The measures are mainly directed at specific and major emissions sources, with the exception of two basic measures that the Commission sees as prerequisites for the realization of the other measures.

Getting to a Low-Carbon Diet - The Commission's General Solution:

Basic measures:

Transportation:

Heating:

Agriculture and waste disposal:

Industrial processes:

Oil and gas activities:

Electricity production:

[Graphic: Illustration of the Commission's general solution. Annual emissions of greenhouse gases in the past, in the Commission’s reference path, and in the proposed low-emission path 1990–2050]

The Commission’s calculations find that the national costs for their greenhouse gas reduction plan need not be exorbitant, given that the measures are implemented when the need for renovation arises and as long as climate-friendly solutions are chosen systematically in new investments. And investment in education, research, development, and testing of climate-friendly technologies will, under any circumstances, strengthen Norway’s technological expertise, the Commission reports.

First Steps - What Must Be Done Now?

The Commission writes: "Even though exchange of equipment and such will take place at a natural pace, it is important that the necessary political signals are sent and framework conditions are established now to achieve a more climate-friendly development in the time to come."

According to the Commission, in cooperation with industry, energy suppliers and organizations, the Norwegian government and decision-makers must implement the following measures during the current parliamentary term:

A Model for the World?

The Commission's report recognizes that any emissions reductions achieved by Norway will have a small impact on worldwide greenhouse gas emissions. According to the Commission, Norway itself is responsible for less than two-tenths of a percent of global greenhouse gas emissions.

However, the Commission recognizes that emissions reduction efforts in Norway can have a significant symbolic effect. The Commission writes: "It is reasonable, as stated in the UN Convention on Climate Change, that rich countries pave the way and reduce their emissions of greenhouse gases before countries with social and economic development needs are required to set climate targets. Norway is without doubt one of the countries which, from this perspective, should agree to restrict its emissions."

The policy and technology solutions tried and tested in Norway can serve as models for the rest of the industrialized and developing world as they follow the lead of countries like Norway, Sweden, Iceland and others who have taken proactive and concerted steps to reduce their greenhouse gas emissions.

That's not to say there's nothing in it for Norway, either. As the Commission writes: "And finally, the Commission believes that the climate problem will make it necessary and desirable from a selfish perspective to reduce emissions sooner or later. The countries that develop the necessary climate-friendly technology early on will gain a competitive advantage in future industry development, and will thus be able to position themselves favorably in a future market for such technology."

As After Gutenberg points out: [Norway's commitment] comes as somewhat of a surprise, since Norway is the largest oil and gas producer among Scandinavian countries and lags behind Denmark, for instance, in the use of wind power, or behind Sweden or Finland in the use of biomass. On the other hand, Reuters reported in June that Norway was establishing a 20 billion Norwegian crowns ($3.24 billion) fund to promote renewable energy such as wind and hydro.

It's also worth noting that, unlike Sweden, Norway's Commission was silent on the issue of expanded use of nuclear power. Swedish policy makers committed to reducing their greenhouse gas emissions (and eliminating their fossil fuel use!) without expanding the use of nuclear power beyond currently operating plants. A 1980 referendum in Sweden committed the country to phase out its fleet of nuclear plants as they reached retirement age.

The leadership exhibited by these Scandinavian countries on addressing climate change and greenhouse gas emissions is laudable. The plan outlined by the Commission seems very sensible and ought to be taken note of by policy makers throughout the world.

Let's hope both Sweden and Norway follow through with their plans and successfully implement several policy and technology solutions that can be adopted by other nations. I hope these two forward-looking countries truly become models for the rest of the world.

Tuesday, October 31, 2006

Norway Going Carbon Lean by 2050

Research Spending Lacking in the Fight Against Global Climate Change

The New York Times carried a fairly in-depth story yesterday highlighting the lack of research dollars - both public and private - flowing into climate friendly energy technology development worldwide.

Despite growing awareness and consensus that action must be taken to address global climate change, the Times reports that research into energy technologies - both government and private spending - has not been rising, but falling. According to the Times, the annual United States federal spending on all energy technologies, not just climate friendly ones, has fallen from an inflation-adjusted peak of $7.7 billion in 1979 to just $3 billion in the current budget.

In contrast, federal spending on medical research has nearly quadrupled since 1979, and military research spending has risen 260 percent over the same period to more than $75 billion a year, over 20 times what is spent on energy research.

[Graphic: Public and private energy research spending (Source: NY Times)]

According to the Times, President Bush has sought an increase to $4.2 billion for the 2007 energy research budget, but that is still only a small fraction of what most climate and energy experts say would be needed.
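For a rough sense of scale, the spending figures the Times reports can be compared with a few lines of arithmetic. This is only a back-of-the-envelope sketch in Python: the dollar amounts are the ones quoted above, while the variable and function names are my own, and the military figure is treated as exactly $75 billion even though the Times describes it as "more than" that.

```python
# Figures in billions of US dollars per year, as reported by the Times.
PEAK_1979 = 7.7            # inflation-adjusted peak in federal energy R&D
CURRENT = 3.0              # current federal energy R&D budget
PROPOSED_2007 = 4.2        # President Bush's 2007 request
MILITARY_RESEARCH = 75.0   # assumption: "more than $75 billion" taken as 75


def percent_decline(old: float, new: float) -> float:
    """Return the percentage drop from old to new."""
    return (old - new) / old * 100


decline = percent_decline(PEAK_1979, CURRENT)
military_multiple = MILITARY_RESEARCH / CURRENT
proposed_share_of_peak = PROPOSED_2007 / PEAK_1979 * 100

print(f"Decline since the 1979 peak: {decline:.0f}%")
print(f"Military research vs. energy research: {military_multiple:.0f}x")
print(f"2007 request as a share of the 1979 peak: {proposed_share_of_peak:.0f}%")
```

On these numbers, energy research spending is down about 61 percent from its peak, and even the proposed 2007 increase would restore only a little over half of the 1979 level.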

Energy and climate experts across the world - including more than four dozen scientists, economists, engineers and entrepreneurs interviewed by the Times - are sounding the alarm and warning that unless the search for abundant carbon-free and renewable energy sources becomes far more aggressive, the world is likely to face dangerous warming and international strife as nations with growing energy demands compete for increasingly inadequate resources.

Read on: "Budgets Falling in the Race to Fight Global Warming", Andrew Revkin (New York Times, October 30, 2006): Cheers fit for a revival meeting swept a hotel ballroom as 1,800 entrepreneurs and experts watched a PowerPoint presentation of the most promising technologies for limiting global warming: solar power, wind, ethanol and other farmed fuels, energy-efficient buildings and fuel-sipping cars.

“Houston,” Charles F. Kutscher, chairman of the Solar 2006 conference, concluded in a twist on the line from Apollo 13, “we have a solution.”

Hold the applause. For all the enthusiasm about alternatives to coal and oil, the challenge of limiting emissions of carbon dioxide, which traps heat, will be immense in a world likely to add 2.5 billion people by midcentury, a host of other experts say. Moreover, most of those people will live in countries like China and India, which are just beginning to enjoy an electrified, air-conditioned mobile society.

The challenge is all the more daunting because research into energy technologies by both government and industry has not been rising, but rather falling.

In the United States, annual federal spending for all energy research and development — not just the research aimed at climate-friendly technologies — is less than half what it was a quarter-century ago. It has sunk to $3 billion a year in the current budget from an inflation-adjusted peak of $7.7 billion in 1979, according to several different studies.

Britain, for one, has sounded a loud alarm about the need for prompt action on the climate issue, including more research. [A report commissioned by the British government and scheduled to be released today calls for spending to be doubled worldwide on research into low-carbon technologies; without it, the report says, coastal flooding and a shortage of drinking water could turn 200 million people into refugees.]

President Bush has sought an increase to $4.2 billion for 2007, but that would still be a small fraction of what most climate and energy experts say would be needed.

Federal spending on medical research, by contrast, has nearly quadrupled, to $28 billion annually, since 1979. Military research has increased 260 percent, and at more than $75 billion a year is 20 times the amount spent on energy research.

Internationally, government energy research trends are little different from those in the United States. Japan is the only economic power that increased research spending in recent decades, with growth focused on efficiency and solar technology, according to the International Energy Agency.

In the private sector, studies show that energy companies have a long tradition of eschewing long-term technology quests because of the lack of short-term payoffs.

Still, more than four dozen scientists, economists, engineers and entrepreneurs interviewed by The New York Times said that unless the search for abundant non-polluting energy sources and systems became far more aggressive, the world would probably face dangerous warming and international strife as nations with growing energy demands compete for increasingly inadequate resources.

Most of these experts also say existing energy alternatives and improvements in energy efficiency are simply not enough.

“We cannot come close to stabilizing temperatures” unless humans, by the end of the century, stop adding more CO2 to the atmosphere than it can absorb, said W. David Montgomery of Charles River Associates, a consulting group, “and that will be an economic impossibility without a major R.& D. investment.”

A sustained push is needed not just to refine, test and deploy known low-carbon technologies, but also to find “energy technologies that don’t have a name yet,” said James A. Edmonds, a chief scientist at the Joint Global Change Research Institute of the University of Maryland and the Energy Department.

At the same time, many energy experts and economists agree on another daunting point: To make any resulting “alternative” energy options the new norm will require attaching a significant cost to the carbon emissions from coal, oil and gas.

“A price incentive stirs people to look at a thousand different things,” said Henry D. Jacoby, a climate and energy expert at the Massachusetts Institute of Technology.

For now, a carbon cap or tax is opposed by President Bush, most American lawmakers and many industries. And there are scant signs of consensus on a long-term successor to the Kyoto Protocol, the first treaty obligating participating industrial countries to cut warming emissions. (The United States has not ratified the pact.)

The next round of talks on Kyoto and an underlying voluntary treaty will take place next month in Nairobi, Kenya.

Environmental campaigners, focused on promptly establishing binding limits on emissions of heat-trapping gases, have tended to play down the need for big investments seeking energy breakthroughs. At the end of “An Inconvenient Truth,” former Vice President Al Gore’s documentary film on climate change, he concluded: “We already know everything we need to know to effectively address this problem.”

While applauding Mr. Gore’s enthusiasm, many energy experts said this stance was counterproductive because there was no way, given global growth in energy demand, that existing technology could avert a doubling or more of atmospheric concentrations of carbon dioxide in this century.

Mr. Gore has since adjusted his stance, saying existing technology is sufficient to start on the path to a stable climate.

Other researchers say the chances of success are so low, unless something breaks the societal impasse, that any technology quest should also include work on increasing the resilience to climate extremes — through actions like developing more drought-tolerant crops — as well as last-ditch climate fixes, like testing ways to block some incoming sunlight to counter warming.

Without big reductions in emissions, the midrange projections of most scenarios envision a rise of 4 degrees or so in this century, four times the warming in the last 100 years. That could, among other effects, produce a disruptive mix of intensified flooding and withering droughts in the world’s prime agricultural regions.

Sir Nicholas Stern, the chief of Britain’s economic service and author of the new government report on climate options, has summarized the cumulative nature of the threat succinctly: “The sting is in the tail.”

The Carbon Dioxide Problem

Many factors intersect to make the prompt addressing of global warming very difficult, experts say.

A central hurdle is that carbon dioxide accumulates in the atmosphere like unpaid credit card debt as long as emissions exceed the rate at which the gas is naturally removed from the atmosphere by the oceans and plants. But the technologies producing the emissions evolve slowly.

A typical new coal-fired power plant, one of the largest sources of emissions, is expected to operate for many decades. About one large coal-burning plant is being commissioned a week, mostly in China.

“We’ve got a $12 trillion capital investment in the world energy economy and a turnover time of 30 to 40 years,” said John P. Holdren, a physicist and climate expert at Harvard University and president of the American Association for the Advancement of Science. “If you want it to look different in 30 or 40 years, you’d better start now.”

Many experts say this means the only way to affordably speed the transition to low-emissions energy is with advances in technologies at all stages of maturity.

Examples include:

Carbon dioxide levels will stabilize only if each generation persists in developing and deploying alternatives to unfettered fossil-fuel emissions, said Robert H. Socolow, a physicist and co-director of a Princeton “carbon mitigation initiative” created with $20 million from BP and Ford Motor.

The most immediate gains could come simply by increasing energy efficiency. If efficiency gains in transportation, buildings, power transmission and other areas were doubled from the longstanding rate of 1 percent per year to 2 percent, Dr. Holdren wrote in the M.I.T. journal Innovations earlier this year, that could hold the amount of new nonpolluting energy required by 2100 to the amount derived from fossil fuels in 2000 —a huge challenge, but not impossible.

Another area requiring immediate intensified work, Dr. Holdren and other experts say, is large-scale demonstration of systems for capturing carbon dioxide from coal burning before too many old-style plants are built.

All of the components for capturing carbon dioxide and disposing of it underground are already in use, particularly in oil fields, where pressurized carbon dioxide is used to drive the last dregs of oil from the ground.

In this area, said David Keith, an energy expert at the University of Calgary, “We just need to build the damn things on a billion-dollar scale.”

In the United States, the biggest effort along these lines is the 285-megawatt Futuregen power plant planned by the Energy Department, along with private and international partners, that was announced in 2003 by President Bush and is scheduled to be built in either Illinois or Texas by 2012. James L. Connaughton, the chairman of the White House Council on Environmental Quality, said the Bush administration was making this a high priority.

“We share the view that a significantly more aggressive agenda on carbon capture and storage and zero-pollution coal is necessary,” he said, adding that the administration has raised annual spending on storage options “from essentially zero to over $70 million.”

Europe is pursuing a suite of such plants, including one in China, but also well behind the necessary pace, several experts said.

Even within the Energy Department, some experts are voicing frustration over the pace of such programs. “What I don’t like about Futuregen,” said Dr. Kutscher, an engineer at the National Renewable Energy Laboratory in Golden, Colo., “is the word ‘future’ in there.”

Beyond a Holding Action

No matter what happens in the next decade or so, many experts say, the second and probably hardest phase of stabilizing the level of carbon dioxide will fall to the generation of engineers and entrepreneurs now in diapers, and the one after that. And those innovators will not have much to build on without greatly increased investment now in basic research.

There is plenty of ferment. Current research ranges from work on algae strains that can turn sunlight into hydrogen fuel to the inkjet-style printing of photovoltaic cells — a technique that could greatly cut solar-energy costs if it worked on a large scale. One company is promoting high-flying kite-like windmills to harvest the boundless energy in the jet stream.

But all of the small-scale experimentation will never move into the energy marketplace without a much bigger push not only for research and development, but for the lesser-known steps known as demonstration and deployment.

In this arena, there is a vital role for government spending, many experts agree, particularly on “enabling technologies” — innovations that would never be pursued by private industry because they mainly amount to a public good, not a potential source of profit, said Christopher Green, an economist at McGill University.

Examples include refining ways to securely handle radioactive waste from nuclear reactors; testing repositories for carbon dioxide captured at power plants; and, perhaps more important, improving the electricity grid so that it can manage large flows from intermittent sources like windmills and solar panels.

“Without storage possibilities on a large scale,” Mr. Green said, “solar and wind will be relegated to niche status.”

While private investors and entrepreneurs are jumping into alternative energy projects, they cannot be counted on to solve such problems, economists say, because even the most aggressive venture capitalists want a big payback within five years.

Many scientists say the only real long-term prospect for significantly substituting for fossil fuels is a breakthrough in harvesting solar power. This has been understood since the days of Thomas Edison. In a conversation with Henry Ford and the tire tycoon Harvey Firestone in 1931, shortly before Edison died, he said: “I’d put my money on the sun and solar energy. What a source of power! I hope we don’t have to wait until oil and coal run out before we tackle that.”

California, following models set in Japan and Germany, is trying to help solar energy with various incentives.

But such initiatives mainly pull existing technologies into the market, experts say, and do little to propel private research toward the next big advances.

The Role of Leadership

At the federal level, the Bush administration was criticized by Republican and Democratic lawmakers at several recent hearings on climate change.

Mr. Connaughton, the lead White House official on the environment, said most critics are not aware of how much has been done.

“This administration has developed the most sophisticated and carefully considered strategic plan for advancing the technologies that are a necessary part of the climate solution,” he said. He added that the administration must weigh tradeoffs with other pressing demands like health care.

Since 2001, when Mr. Bush abandoned a campaign pledge to limit carbon dioxide from power plants, he has said that too little is known about specific dangers of global warming to justify hard targets or mandatory curbs for the gas.

He has also asserted that any solution will lie less in regulation than in innovation.

“My answer to the energy question also is an answer to how you deal with the greenhouse-gas issue, and that is new technologies will change how we live,” he said in May.

But critics, including some Republican lawmakers, now say that mounting evidence for risks — including findings that administration officials have tried to suppress of late — justifies prompt, more aggressive action to pay for or spur research and speed the movement of climate-friendly energy options into the marketplace.

Martin I. Hoffert, an emeritus professor of physics at New York University, said that what was needed was for a leader to articulate the energy challenge as President John F. Kennedy made his case for the mission to the moon. President Kennedy said his space goals were imperative, “not because they are easy, but because they are hard.”

In a report on competitiveness and research released last year, the National Academies, the country’s top science advisory body, urged the government to substantially expand spending on long-term basic research, particularly on energy.

The report, titled “Rising Above the Gathering Storm,” recommended that the Energy Department create a research-financing body similar to the 48-year-old Defense Advanced Research Projects Agency, or Darpa, to make grants and attack a variety of energy questions, including climate change.

Darpa, created after the Soviet Union launched Sputnik in 1957, was set up outside the sway of Congress to provide advances in areas like weapons, surveillance and defensive systems. But it also produced technologies like the Internet and the global positioning system for navigation.

Mr. Connaughton said it would be premature to conclude that a new agency was needed for energy innovation.

But many experts, from oil-industry officials to ecologists, agree that the status quo for energy research will not suffice.

The benefits of an intensified energy quest would go far beyond cutting the risks of dangerous climate change, said Roger H. Bezdek, an economist at Management Information Systems, a consulting group.

The world economy, he said, is facing two simultaneous energy challenges beyond global warming: the end of relatively cheap and easy oil, and the explosive demand for fuel in developing countries.

Advanced research should be diversified like an investment portfolio, he said. “The big payoff comes from a small number of very large winners,” he said. “Unfortunately, we cannot pick the winners in advance.”

Ultimately, a big increase in government spending on basic energy research will happen only if scientists can persuade the public and politicians that it is an essential hedge against potential calamity.

That may be the biggest hurdle of all, given the unfamiliar nature of the slowly building problem — the antithesis of epochal events like Pearl Harbor, Sputnik and 9/11 that triggered sweeping enterprises.

“We’re good at rushing in with white hats,” said Bobi Garrett, associate director of planning and technology management at the National Renewable Energy Laboratory. “This is not a problem where you can do that.”

Monday, October 30, 2006

News From My Backyard: TriMet Switches Entire Fleet to B5 Biodiesel Blend

TriMet, Portland, Oregon's metro area mass transit authority, is expanding the use of biodiesel across its bus fleet. According to the Oregonian, the transit agency will put a 5 percent biodiesel blend in all 611 of its buses, helping to reduce the area's harmful exhaust emissions.

The first truckloads of the B5 biodiesel blend will be delivered to TriMet on Monday by Carson Oil Co, the Oregonian reports.

"I think it's a huge step forward," said Jeff Rouse, alternative fuels manager for Carson Oil. "This is a pivotal point in TriMet's relationship with alternative fuels."

TriMet's move is just one of several recent efforts by the City of Portland and TriMet to boost biofuels and tackle the environmental effects of diesel vehicles [see previous posts here, here and here].

TriMet will use an estimated 327,000 gallons of biodiesel a year, more than the state's next three biggest biodiesel users combined, a spokeswoman, Mary Fetsch, told the Oregonian.

Biodiesel is produced from plant oils, used cooking oils and waste animal fats. The B5 fuel will consist of 5 percent biodiesel and 95 percent petroleum diesel.
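To put the B5 numbers in perspective, here is a quick sketch of what 327,000 gallons of biodiesel implies for the fleet's total fuel use. It rests on two assumptions of mine that the Oregonian figures do not spell out: that the 327,000 gallons refers to the pure biodiesel portion of the blend, and that all of it is burned by the 611 buses. The names in the code are illustrative only.

```python
BIODIESEL_GALLONS = 327_000  # annual biodiesel use reported by TriMet
BLEND_PERCENT = 5            # B5: 5 percent biodiesel, 95 percent petroleum diesel
BUSES = 611                  # buses receiving the blend


def total_blended_fuel(biodiesel_gallons: float, blend_percent: float) -> float:
    """Total gallons of blended fuel containing the given biodiesel volume."""
    return biodiesel_gallons * 100 / blend_percent


# Assumption: the reported figure is the pure biodiesel share of the blend.
total_fuel = total_blended_fuel(BIODIESEL_GALLONS, BLEND_PERCENT)
petroleum_diesel = total_fuel - BIODIESEL_GALLONS
fuel_per_bus = total_fuel / BUSES  # assumption: fuel spread evenly over 611 buses

print(f"Total blended fuel: {total_fuel:,.0f} gallons per year")
print(f"Petroleum diesel still burned: {petroleum_diesel:,.0f} gallons per year")
print(f"Blended fuel per bus: {fuel_per_bus:,.0f} gallons per year")
```

Under those assumptions the fleet burns roughly 6.5 million gallons of fuel a year, which shows both how large the order is and how much petroleum diesel remains to be displaced by higher blends.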

A handful of other transit agencies have been more aggressive than TriMet in switching to biodiesel, the Oregonian reports. Buses in St. Louis and Cincinnati burn B20 - a 20 percent blend, and this year, the Central Ohio Transit Authority began using a 90 percent blend in its buses.

The Oregonian reports that TriMet is sticking with B5 for now because the agency's engine manufacturers will only warranty their engines for use with the 5 percent blend. "The industry is moving toward that," Fetsch said. "We hope to see an increased level of allowable biodiesel in the next year."

"It will make a difference," to air quality in the Portland area, said Kevin Downing, clean diesel program coordinator for the state Department of Environmental Quality. A 5 percent biodiesel blend cuts particulate emissions by about 1 percent, Downing said. The higher the blend, the more it benefits air quality.

"The real strength in biodiesel is not so much on the air quality side," he said. "It is in renewability, the global warming benefits, and the fact that you're not going to the Middle East, you're going to the Midwest."

During the past year, TriMet tested B5 biodiesel in its fleet of LIFT buses that serve people with disabilities and the elderly [see previous post]. Agency officials had concerns about the fuel gelling in cold weather but Fetsch told the Oregonian that the tests showed no problems.

The Central Ohio Transit Authority addresses cold weather concerns by using a 90 percent blend during the warmer months, switching to 50 percent in October, and 20 percent in December.

As required by federal law, TriMet began using ultra-low sulfur diesel this month [see previous post], which cuts the sulfur content by 97 percent.

The transit agency announcement is the latest in a series of recent biodiesel developments rapidly earning Portland a reputation as a major national center for biodiesel consumption. The Portland City Council adopted an ordinance that will require gas stations to sell the 5 percent biodiesel blend next year, and the city's Water Bureau vehicles switched to B99, a 99 percent biodiesel blend, last month [previous post].

The TriMet contract makes Carson the state's largest biodiesel distributor. "It allows us to continue to invest in growing the industry," Rouse said. TriMet's decision and the city's recent actions help build demand for biodiesel, he said.

Rouse said the city's requirement that gas stations sell biodiesel will send a message to motorists that it's OK to burn the fuel in their cars.

"And it's a matter of civic pride," he said. "All of a sudden you realize you're replacing 5 percent of the fuel you use with a renewable resource, and you're not contributing to foreign oil issues. It's a great American story."

[A hat tip to the Oregonian and Green Car Congress]

News From My Backyard: Washington Wave Energy Pilot Project Completes Environmental Review

[From Renewable Energy Access.com:]

The Makah Bay Offshore Wave Energy Pilot Project recently completed the Preliminary Draft Environmental Assessment (PDEA) process. The project, which is being developed through Finavera Renewables' wave energy division and subsidiary AquaEnergy Group Ltd., is expected to deliver 1,500 megawatt hours annually to the Clallam County Public Utility's grid in Washington state by the end of 2006.

The PDEA, completed by a Federal Energy Regulatory Commission (FERC) qualified assessor, concluded the project would have "no significant environmental effects" on the oceanographic, geophysical and biological conditions of the Makah Bay.

"The successful installation of the proposed offshore energy power plant will herald the beginning of a new renewable energy industry sector, bringing ocean energy one step closer towards generation of clean, competitively priced electricity to commercial and residential consumers in Washington state and other coastal U.S. states," said Alla Weinstein, CEO of AquaEnergy and the first President of the European Ocean Energy Association.

The AquaEnergy offshore plant consists of patented wave energy converters, AquaBuOYs, based on heaving-buoy point absorber and hose-pump technologies. The mechanical portion of the Makah Bay pilot power plant will consist of four low-profile moored buoys placed 3.2 nautical miles offshore in water depths of 150-250 feet, to transform wave energy into usable electrical energy.
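As a rough check on the scale of the pilot, the 1,500 megawatt-hours per year can be converted into an average power output. This is a simple sketch using only the annual energy figure and the buoy count reported above; the names are my own, and the result is an average over the whole year, not the rated capacity of the buoys, which is not given here.

```python
ANNUAL_MWH = 1_500       # expected annual delivery to the grid
HOURS_PER_YEAR = 8_760   # 365 days x 24 hours (non-leap year)
BUOYS = 4                # moored buoys in the pilot plant

# Average output over the year, in kilowatts (1 MWh = 1,000 kWh).
average_kw = ANNUAL_MWH * 1_000 / HOURS_PER_YEAR
average_kw_per_buoy = average_kw / BUOYS

print(f"Average output of the plant: {average_kw:.1f} kW")
print(f"Average output per buoy: {average_kw_per_buoy:.1f} kW")
```

That works out to an average of roughly 170 kilowatts for the whole plant, which underlines that this is a small pilot project and not a utility-scale installation.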

Since the project inception in 2001, AquaEnergy conducted meetings with environmental groups, fisherman's associations, and commercial and recreational users of Makah Bay. A consortium formed for the project includes the Makah Indian Nation, Clallam County Public Utility District (PUD), Washington State University, Bonneville Power Administration through the Northwest Energy Innovation Center, and the Clallam County Economic Development Council.

"The Makah Tribe has interest in using energy derived from renewable resources. The Makah Nation chose to partner in this project due to the environmental integrity and low impact of AquaEnergy's offshore buoy technology," said Ben Johnson Jr., Makah Tribal Council Chairman.

Several other projects in California and Oregon may soon be converting the ocean's energy into electricity as well. In San Francisco, California, Mayor Gavin Newsom recently announced the city will explore the possibility of generating power from the tidal flow under the Golden Gate Bridge.

In late September, the City of San Francisco launched a $150,000 feasibility study to examine the tidal energy project, which could generate up to 35 megawatts (MW) of power, according to the Electric Power Research Institute and the San Francisco Public Utilities Commission. The feasibility study should be completed in late 2007 or early 2008.

The Oregon wave energy project is further along, as Ocean Power Technologies (OPT) has already received a preliminary permit from the FERC to develop a project off the coast of Reedsport, which is southwest of Eugene.

When OPT applied for the permit in July, the company said it initially plans to install a 2-megawatt (MW) wave power project about 2.5 miles off the coast, where the ocean depth is about 55 yards. OPT plans to eventually scale up the plant to 50 MW.

[Update: 11/13/06] The Makah Bay wave energy demo project has now formally entered the Federal Energy Regulatory Commission's licensing process, according to a November 3rd announcement from AquaEnergy Group (as reported in the 11/13/06 edition of the Clearing Up energy newsletter).

AquaEnergy's application will be considered under FERC's alternative licensing process, or ALP, which includes prefiling consultation and environmental reviews under the National Environmental Policy Act. This is the first wave energy project to take the ALP path. AquaEnergy reportedly eschewed the traditional FERC licensing process, which could take several years, as well as a preliminary permit, which would only serve as a 'placeholder' conferring no particular advantage to the company. The ALP path could be completed within a couple of years, and the plant could be built in 12 to 18 months, AquaEnergy's CEO, Alla Weinstein, told Clearing Up.

Along with the license application, AquaEnergy filed the PDEA discussed in the post above.

According to Clearing Up, Clallam County PUD agreed back in 2003 to purchase power from the Makah Bay wave energy plant at 4 cents per kilowatt-hour over three years. That low price - competitive with current prices for coal and wind generation - indicates that either AquaEnergy's AquaBuOY device can already generate electricity at a competitive rate, or that AquaEnergy plans to take a loss, or seek other sources of subsidies and revenue, for this first wave energy project.
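For a rough sense of scale, here is a back-of-the-envelope sketch in Python using only the figures quoted in this post: 1,500 megawatt-hours delivered per year and a price of 4 cents per kilowatt-hour. The function names are my own, and the sketch assumes the plant actually delivers its full expected output every year at the flat contract price, which a pilot project may well not do.

```python
HOURS_PER_YEAR = 8760  # assumption: a non-leap year


def annual_revenue_usd(mwh_per_year, price_usd_per_kwh):
    """Gross revenue if every kilowatt-hour is sold at a flat price."""
    return mwh_per_year * 1000 * price_usd_per_kwh


def average_output_kw(mwh_per_year):
    """Average power implied by spreading annual energy over the whole year."""
    return mwh_per_year * 1000 / HOURS_PER_YEAR


revenue = annual_revenue_usd(1500, 0.04)
print(f"Annual revenue: ${revenue:,.0f}")
print(f"Three-year total: ${3 * revenue:,.0f}")
print(f"Average output: {average_output_kw(1500):.0f} kW")
```

Under those assumptions the power sales come to roughly $60,000 a year, which suggests the contract price matters less to this pilot than the operating experience it generates.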

Ocean energy - wave and tidal power - keeps gathering more and more momentum on the West Coast these days. There seems to be consistent press coverage of something ocean energy-related each week.

The Makah Bay project has been in the works the longest of the proposed North American wave energy facilities and looks to be the first to get in the water and hooked up to the grid as well. It will be invaluable to have a project on the West Coast in the water to generate some experience and real data on environmental impacts, ocean conditions, operating experience, etc.

The Ocean Power Technologies Reedsport project in Oregon looks to be following a bit behind the Makah Bay project and OPT has plans to expand their initial 2 MW project to a full commercial-scale 50 MW facility [see previous post].

I'll keep covering any new developments on these and other West Coast wave and tidal energy projects as they come up...

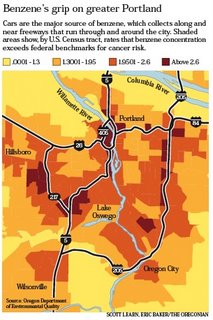

News From My Backyard: Portland's Air Thick With Toxic Chemical From Car Exhaust

The Oregonian ran this alarming article today that ought to concern any Portland, Oregon-area resident.

Apparently, Portland's airshed contains dangerous levels of the toxic and carcinogenic chemical benzene due to high benzene concentrations in the gasoline burned by Oregonians' cars, trucks and SUVs. Benzene, a potent chemical that causes cancer and blood disorders, is found in the air around many major cities with lots of vehicles. But the high benzene levels in Oregon gasoline mean that Portland's airshed has much higher concentrations of the dangerous chemical than would be expected for a city of its size - according to the article, Portland's benzene levels are roughly the same as those found in the air over the Bronx borough of New York City, for example!

And Portland isn't alone. According to the article, gasoline in Oregon and Washington appears to have the highest benzene levels within the lower 48 states. Benzene levels in and around Seattle, Tacoma and other urban areas in Washington are also presumably higher than in other cities of their size and are likely at or near dangerous levels. Benzene levels in Oregon and Washington gasoline are twice as high as the national average, and three times higher than in California, the article reports.

This is a real public health concern that should be addressed. Clearly there is no reason why Oregon's or Washington's gasoline standards should not include the stricter benzene limits found in many other states, including our neighbor to the south, California.

The EPA has proposed new 'cap-and-trade' regulation for benzene that would set a national cap on refineries starting in 2011 - and would likely preclude any state action - but the EPA predicts that refineries in the Northwest would rely on the trading system to purchase credits from refineries in other regions to avoid making improvements to reduce benzene in their gasoline. Although benzene levels in Northwest gasoline would drop, they would remain the highest in the nation, the EPA predicts.

The Oregon DEQ has tried to block the new trading system saying that it puts Oregonians "needlessly at risk." A weak federal law would block states from enacting stricter legislation and the Oregon DEQ argues it's better to have no federal law at all, leaving it to states like Oregon to regulate their own benzene levels. The EPA is now considering comments and is expected to release a final version of the new regulations in coming months.

[A hat tip to Natalie McIntire]

Read on: "There's danger in the air", by Michael Milstein (The Oregonian, October 30th, 2006):

On sunny days, families picnic in Tom McCall Waterfront Park, joggers trot by and teenagers chat on cell phones.

You wouldn't know it from the crisp view of a shining Mount Hood, but they're all breathing a soup of toxic air. It's especially full of an invisible but dangerous offender: benzene, spewed from tailpipes of cars on highways ringing the city.

Benzene, a potent chemical that causes cancer and blood disorders, is not unusual in major cities with lots of vehicles. But in Portland, it's worse.

That's because the gasoline we put in our cars, pickups and SUVs is dirtier.

It holds nearly twice as much benzene as the national average and three times as much as gasoline in California, where strict limits make its gasoline the cleanest. Parts of Portland last year recorded benzene levels about as high as the Bronx borough of New York City -- in some neighborhoods many times above levels considered healthy for long-term exposure.

The reason? The federal government requires cleaner gasoline elsewhere -- it makes no such requirement in the Northwest because our skies are considered too clean to trigger regulation. So vehicles in Oregon and Washington, fueled by gasoline made from benzene-rich Alaskan oil, vent about 50 percent more toxic compounds into the air per mile than cars in East Coast and Southern states, data from the U.S. Environmental Protection Agency show.

The skies above humming freeways such as Interstate 5, I-84 and I-405 flow with hidden rivers of these chemicals. Those rivers surge into Portland's urban core, filling downtown, residential neighborhoods and industrial zones like soup in a bowl.

Although the EPA now proposes new rules to reduce benzene in gasoline nationally, it would allow gasoline here to remain the dirtiest in the country and pre-empt Oregon from adopting tougher limits of its own -- because states usually cannot override a federal rule.

State and local air quality agencies are fighting the approach, saying it leaves people here at unacceptably high risk.

Soaring cancer risks

Assessing the risk is tricky. The more benzene in gasoline, the more ends up in the air. Although the risk of developing cancer from a lifetime of breathing benzene remains slim in Multnomah County -- about 26 in a million, far less than the risk from smoking -- it's more than twice the national average, according to federal data.

"The higher the benzene, the worse off you are," said Dave Nordberg, a transportation specialist with the Oregon Department of Environmental Quality.

For people in parts of the city laden with toxic air, however, the risk runs higher. A new analysis by the DEQ, which examined the way bad air swirls through the city, shows benzene concentrations in parts of downtown and the Pearl District at more than 200 times levels considered safe for people breathing it throughout their lives.

The analysis was based on pollution data from 1999. But some monitors in Portland last year showed as much benzene in the air as monitors in the Bronx.

Until now, Oregon and federal air authorities focused mainly on pollution that contributes to smog. Some basic measures -- such as the accordion-shaped nozzles on gas pumps -- capture benzene that might otherwise evaporate into the air. But agencies have recently turned much more attention to invisible but harmful compounds such as benzene, setting benchmarks and studying their levels in local air.

About two-thirds of benzene in downtown Portland comes from tailpipes of vehicles -- a much larger source of toxic air compounds than industrial smokestacks, the DEQ has found.

It's worst close to major highways: Benzene levels measure 10 times higher within 50 meters of a road than 400 meters away.

The DEQ's goal is for no more than one person in a million to get cancer from breathing the air, environmental officials say. But typical Portlanders breathing local air throughout their life run a risk of cancer 66 times higher.

Breathing the worst air in the city? Your risk runs 350 in a million, according to the DEQ results.

The average Portland resident is almost 10,000 times more likely to get cancer breathing local air than to win the jackpot in Powerball, DEQ figures show.

Benzene, which causes genetic damage and immune system and blood disorders, contributes almost a quarter of the cancer risk. It's the largest contributor of any toxic compound the DEQ examined.

But it's only one of many toxins exhaled by cars. The next major contributor to cancer risk is the fine particles in diesel exhaust, a prime cause of lung cancer. Others include formaldehyde and butadiene, which cause respiratory disorders, cancers and immune system problems.

"Just the number of cars we have overwhelms everything else," Russell said. "Even our biggest industrial sources are dwarfed by what comes from cars."

The automotive population of the Portland metropolitan area is growing faster than its human population.

There are 27 percent more cars now in Clackamas, Multnomah and Washington counties than 10 years ago, according to Oregon Department of Transportation figures.

Cars have gotten much cleaner over the decades, and new tailpipe emissions standards pushed by Gov. Ted Kulongoski will help, state officials say. But "the problem is that our car population has skyrocketed," said Monica Russell of the DEQ's air quality program. "So all the gains we made have been offset."

They have also been undercut by our dirtier gasoline.

The credit conundrum

Oregon gets 90 percent of its gasoline from refineries in Washington, and more than 80 percent of that comes from the North Slope of Alaska, according to the Oregon Department of Energy. The oil may have 10 times as much benzene in it as oil from other regions, according to the EPA.

Refineries can remove benzene from gasoline, and they do in polluted parts of the country where the government requires it. But that does not include the Northwest.

Also, refineries that remove benzene sometimes sell it to chemical companies that use it in the manufacture of other compounds. But there is not a large enough chemical industry in the Northwest to create much appetite for benzene here, Nordberg said.

"It makes it more expensive to take it out of our gasoline than other gasoline," he said.

The BP Cherry Point refinery, the largest in Washington, installed a new $115 million unit in 2003 mainly to reduce sulfur levels in its gasoline. But it has the dual benefit of eliminating most benzene. The refinery, which supplies ARCO stations in the Northwest, now produces gasoline with less than half the average benzene levels in the region, said spokesman Mike Abendhoff.

The EPA does not reveal levels of benzene in gasoline from individual refineries, saying it's confidential business information. It releases benzene levels only by groups of states, and it lumps Oregon and Washington with Nevada, Arizona, Alaska and Hawaii.

Gasoline in those states has more benzene -- and burning it puts more toxins in the air -- than gas anywhere else in the country, based on refinery data from 2003.

Oregon and Washington appear to have the highest benzene levels within the lower 48 states, based on interviews with state officials and refinery operators. Arizona, for example, gets its gasoline from different sources and has tighter regulations, so its benzene levels are lower.

Nobody decided the Northwest would end up with more benzene -- it just turned out that way. The Clean Air Act began to clamp down on toxic air pollution in the 1990s, but focused first on the most populated, polluted regions, said EPA spokesman John Millett. That left the Northwest out.

Pressured by lawsuits, the EPA proposed a new national rule limiting benzene in gasoline starting in 2011. But the limit would be a national average -- some refineries could produce gasoline with more, and others with less.

Refineries get credits if they produce gasoline with less benzene. Other refineries could buy credits instead of upgrading facilities to make cleaner gasoline.

The EPA is now considering comments and is expected to release a final version in coming months.

The trading system is intended to give refineries flexibility to make improvements where it's most cost-effective.

But the EPA predicts that refineries in the Northwest would rely on the trading system to avoid making as many improvements. Although benzene levels in Northwest gasoline would drop, they would remain the highest in the nation, the EPA predicts.

"It makes good sense until you realize we're the guys who aren't getting the levels down," Nordberg said.

But Al Mannato of the American Petroleum Institute said the Northwest could actually benefit more than much of the country. That's because the region's benzene levels are so high now, they would be reduced by a larger percentage than those elsewhere.

"The areas that are a little higher are still getting benzene levels much lower than they would without this," he said.

He also said many refineries have already reduced benzene output in the past few years. More intensive processing to reduce benzene shrinks the gasoline supply because less gas is produced.

But the Oregon DEQ tried to shoot down the trading system. Air Quality Administrator Andrew Ginsburg wrote to the EPA that its approach "puts Oregon citizens needlessly at risk" and leaves the Pacific Northwest with benzene levels twice as high as some other states.

He said the EPA should require refiners everywhere to reduce benzene to the greatest extent possible.

"By setting up a trading program, they've perpetuated the problem," he said.

The weak federal standard also puts the state at a disadvantage, by blocking the state from enacting its own rule, he said.

"Instead of making a weak rule," he said, "it's better that they make no rule."

Wednesday, October 25, 2006

With Leaders Like These, What Do We Have to Fear? - Secretary of Energy Appoints Former Exxon CEO to Craft National Energy Policies

While I can't find confirmation of this in the news, Daniel Sweeney of Charge (blog) reports that this week, Secretary of Energy Samuel Bodman has appointed former Exxon-Mobil CEO, Lee Raymond, to head a group developing national energy policy initiatives.

As Sweeney points out:

"[This is the guy] who during his tenure at the oil giant funded pseudo-scientific research purporting to disprove the existence of global warming and scoffed at the notion of lessening foreign oil dependence through the promotion of alternative energy sources. Exxon-Mobil has been virtually alone among the biggest international petroleum companies in its failure to diversify into alternative energy in one form or another, and also has been unusual in its stated insistence that global climate change studies are “junk science”.

"Exxon-Mobil under Raymond’s leadership also issued statements to the effect that current conventional oil reserves total in excess of 3.1 trillion barrels, almost double the median number cited by leading oil analysts, and suggested that oil producers would increase production to over 50% above current levels. Exxon also dismissed the conventional wisdom to the effect that almost all oil producers have already passed the peak of production. Scores of countries would be boosting production in the future according to Exxon’s top management."

Well, I for one am extremely comforted to know that a man with such unflinching confidence in his positions, a man willing to scoff at the vast array of scientific evidence and opinion opposing him, a man with clear vision for where to lead this country during our modern energy crisis is now helping to craft national energy policy strategies. With leaders like these, we've got nothing to fear, right?!

All sarcasm aside, I am simply amazed at this appointment. First of all, I thought we had already bid a fond farewell to Mr. Raymond, but the man just won't stay down. Second, I can't imagine how Bush plans to reconcile the consistent positions of Mr. Raymond and Exxon-Mobil (at least he's been consistent, you've got to give him that) with Mr. Bush's recent (empty?) rhetoric about America's addiction to oil and talk about alternative fuels.

How do you square that circle, Mr. Bush?

Of course, this is just another indication of where Bush's true intentions and loyalties lie. Don't worry folks, Bush, Raymond and Co. will lead the way...

[An obvious hat tip to Mr Sweeney at Charge]

A Reminder of Why a Sustainable Energy Future is Critical: Global Ecosystems Face Collapse

Current global consumption levels could result in a large-scale ecosystem collapse by the middle of the century, a recent report from the World Wildlife Fund (WWF) warns.

The environmental group's biennial Living Planet Report concludes that the natural world is being degraded "at a rate unprecedented in human history." Terrestrial species declined by 31% between 1970 and 2003, the findings showed, and the report warns that if demand continues to grow at current rates, two planets would be needed to meet global demand by 2050.

The report should serve as an excellent reminder of why a transition to a sustainable energy future is absolutely critical.

According to the report, the massive and rapid loss of biodiversity was a result of resources being consumed faster than the planet could replace them. The report's authors added that if the world's population shared the UK's lifestyle, three planets would be needed to support their needs (and it would presumably be worse if the world's population were to adopt the American lifestyle).

The nations that were shown to have the largest "ecological footprints" were the United Arab Emirates, the United States and Finland, according to the report.

Paul King, WWF director of campaigns, told the BBC that the world was running up a "serious ecological debt." "It is time to make some vital choices to enable people to enjoy a one planet lifestyle," he said, indicating that serious changes in resource consumption patterns need to occur in order for the world's population to 'live within our means.'

"The cities, power plants and homes we build today will either lock society into damaging over-consumption beyond our lifetimes, or begin to propel this and future generations towards sustainable one planet living," King said.

The report, compiled by the Zoological Society of London (ZSL) and the Global Footprint Network, is based on data from two indicators:

The Living Planet Index tracked the population of 1,313 vertebrate species of fish, amphibians, reptiles, birds and mammals from around the world, according to the BBC. It found that these species have declined by about 30% since 1970, suggesting that natural ecosystems are being degraded at an unprecedented rate.

According to the BBC, the Ecological Footprint Index measured the amount of biologically productive land and water needed to meet the demand for food, timber and shelter, and to absorb the pollution and waste from human activity. The report concluded that the worldwide global footprint exceeded the earth's biocapacity by 25% in 2003, which means that the Earth can no longer keep up with the demands being placed upon it.

The findings echo a study published earlier this month that said the world went into "ecological debt" on October 9, 2006. That study, published by UK-based think-tank New Economics Foundation (Nef), was based on the same Ecological Footprint data compiled by the Global Footprint Network, which also provided the figures for this latest report from the WWF.

'Large-scale collapse'

One of the report's editors, Jonathan Loh from the Zoological Society of London, told the BBC: "[It] is a stark indication of the rapid and ongoing loss of biodiversity worldwide." "Populations of species in terrestrial, marine and freshwater ecosystems have declined by more than 30% since 1970," he added. "In the tropics the declines are even more dramatic, as natural resources are being intensively exploited for human use."

The report outlined five scenarios based on the data from the two indicators, ranging from 'business as usual' to 'transition to a sustainable society.'

Under the 'business as usual' scenario, the authors projected that the resources needed to meet demand in 2050 would be twice as much as what the Earth could provide. The authors warned: "At this level of ecological deficit, exhaustion of ecological assets and large-scale ecosystem collapse become increasingly likely."

To deliver a shift towards a "sustainable society" scenario would require "significant action now" on issues such as energy generation, transport and housing.

The latest Living Planet Report is the sixth in a series of publications which began in 1998.

Energy Use a Main Culprit

As the graph below indicates, carbon dioxide emissions from the combustion of fossil fuels are a major contributor to the Ecological Footprint Index scores of many overconsuming nations. Radioactive wastes from nuclear energy also contribute to the scores of many nations.

The inclusion of these factors in the index reflects the common sense notion that the widespread use of non-renewable energy resources that produce pollutants, greenhouse gas emissions, and/or long-lived radioactive wastes is not compatible with a 'one world' lifestyle.

If we want to live within our means on the only planet that we've got, we must strive to rapidly transition to a more sustainable energy future, one that relies on renewable resources and produces little or no pollutants, greenhouse gas emissions or radioactive wastes. A sustainable energy infrastructure must be a critical part of any strategy that aims to bring consumption patterns back within levels that can be supported by the planet.

Ecological Debtors and Creditors

The following maps illustrate the fact that current consumption patterns represent an entirely unjust case of international inequity. Many of the world's developed and rapidly developing nations are in 'ecological debt', consuming far more resources per capita than could be supported if everyone on this planet were to consume at that level. Thus, the less developed nations of the world are currently 'financing the ecological debts' of the more developed countries, a situation that is clearly unjust and unfair.

But it is also clear that the planet cannot support a global population that consumes resources the way the average American or Western European does. We simply don't have two or three planets to spare!

Thus, as with the efforts to reduce greenhouse gas emissions, the responsibility to bring our environmental footprint back to sustainable levels lies with the developed countries. We must transition to sustainable consumption patterns and drastically reduce our environmental footprint.

This is it!

We only have one planet Earth.

This is it, our only home, and we're using it up.

It's time to learn how to live within our means!

[A hat tip to Jonathan Dunn and the BBC]

Wednesday, October 18, 2006

News From My Backyard: Oregon Gets National Press for Wave Energy Development Efforts

Oregon's efforts to develop the first utility-scale wave energy facility in North America and become the capital of the nascent wave energy industry got some national press coverage this week. MSNBC devoted some ink to Oregon wave energy efforts in an article on Monday.

In July, Ocean Power Technologies (OPT), known for its PowerBuoy wave energy device, filed an application for construction permission to the U.S. Federal Energy Regulatory Commission (FERC) for a 50-megawatt (MW) wave power generation project in Oregon, the first request in the U.S. for such a power project on a utility-scale level [see previous post].

OPT plans to initially install a 2 MW pilot-scale project 2.5 miles off the coast of Reedsport, Oregon. Approval for the full-scale 50 MW wave power plant will follow completion of the initial 2 MW program.

Meanwhile, the Oregon Department of Energy and Oregon State University, with strong backing from Governor Ted Kulongoski, are seeking funding for a national wave energy research facility on the Oregon coast, in and around Newport, Oregon [see previous post]. Governor Kulongoski has repeatedly stated that he plans to make Oregon the wave energy capital of North America, if not the world.

Oregon's efforts haven't gone unnoticed, it appears, as this MSNBC/Associated Press article indicates:

Wave energy buoys proposed for the Oregon coast could generate enough electricity to power about 2,000 homes, supporters say.

I've been remiss and haven't been keeping tabs over at Treehugger recently, but it looks like they have run a number of posts on ocean energy which are worth checking out:

Ocean Power Technologies has installed smaller, single test buoys in Hawaii and New Jersey. But the larger buoys proposed for Oregon would be arrayed in four rows of 50 for a total of 200, requiring about 1.5 square miles of ocean, according to company consultant Steve Kopf.

"Nobody's ever done this before," Port of Umpqua Commissioner Keith Tymchuk said during a recent hearing on the proposal. "Nowhere in the United States has there been a project like this permitted before."

The meeting was part of the requirements for a Federal Energy Regulatory Commission license application by Ocean Power.

The port and other Reedsport and Douglas County officials and state agencies have been working with wave energy companies and Oregon State University for more than a year to develop a buoy park off the coast at Gardiner.

One of the key advantages of the Gardiner site is the former International Paper mill site that has an effluent pipe that stretches underground to the ocean and an electricity substation already on site.

More importantly, Kopf said, is that the easements for that pipe already are in place.

Oregon isn't the only state seeking renewable energy sources. California also is seeking alternative energy projects, Kopf said, but Oregon, and Reedsport in particular, has several advantages over California.

The abundance of waves is excellent, Oregon offers more financial incentives and, most importantly, there was significant momentum and public support for the project, Kopf said.

"We're here because we think Oregon and specifically this part of the coast, wants this type of project," Kopf said.

Gov. Ted Kulongoski also supports the project. He has organized a team to help streamline the licensing and development process, headed by Tymchuk and state Sen. Joanne Verger, D-Coos Bay.

Kopf said there has been some concern about the loss of fishing area in the wave park.

"But we're trying to find a balance," said Oregon Department of Energy spokesman Justin Klure.

Happy reading!

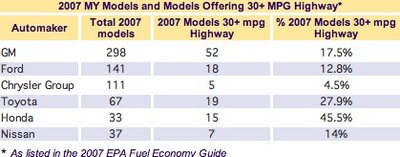

Who's the Leader in Fuel Efficient Vehicles: GM or Honda?

Green Car Congress (GCC) has an excellent post today examining the claims made by General Motors (GM) that it "leads the industry with more vehicles that achieve 30 mpg, on the highway, than any other manufacturer."

GM used the recent release of the 2007 Environmental Protection Agency (EPA) Fuel Economy Guide to publicly make these claims. GCC points out that while their claims are true from the frame of reference of an absolute number of models offered, GM also sells more models than any other automaker. The 2007 Fuel Economy Guide lists 298 separate GM models (including different powertrain options for a given model). Fifty-two of those obtain 30 mpg or better on the highway, or 17.45% of total models offered.

However, as GCC goes on to elaborate, more than 18% of all models sold in the US (as listed in the EPA Fuel Economy Guide) deliver 30 mpg or better on the highway (193 models out of 1,058). And on the basis of 30+ mpg highway models as a percentage of all models sold, while GM is not the lowest of the top six automakers (that falls to the Chrysler Group), neither is it the leader (see graphic below).

[Graphic: Total 2007 models, models with >30 mpg highway, and percentage of total for the top six automakers. Click to enlarge. Source: Green Car Congress]

When you look at the percentage of models offered, rather than absolute numbers, Honda takes the top spot for most fuel efficient auto manufacturer, with 45.5% of its 2007 models delivering 30 miles per gallon or better on the highway.

On a percentage basis, GM ranks third behind both Honda and Toyota, but ahead of Ford, Chrysler and Nissan.  [Chart from Green Car Congress]

So, who gets the bragging rights? Well, I'm sure both GM and Honda will go ahead and claim them, but it's kind of up to you. Which do you value more: a larger number of efficient models on offer, or a higher percentage of that manufacturer's lineup?

In my opinion, if you sell a ton of Chevy Silverados along with your little Chevy Aveos, you really had better not be touting your commitment to selling 'green machines.' A percentage-based metric seems like a better gauge of who's more committed to selling efficient vehicles, and that title goes to Honda.

Any thoughts?

News From My Backyard: Portland Water Bureau Using B99 Biodiesel - Largest B99 Fleet In Country

Starting on September 26th, the Portland (Oregon) Water Bureau switched its fleet of diesel-powered vehicles to B99 (a fuel consisting of more than 99% bio-diesel), according to a Bureau press release. The bureau’s diesel-powered fleet has run on B20 (a 20% biodiesel / 80% petroleum diesel blend) since August, 2004.

“This effort makes the Water Bureau’s 84-vehicle diesel fleet the largest in the country running on B99,” says Portland City Commissioner Randy Leonard, “We’re doing our part to increase demand for biodiesel which will help to spur the development of Oregon-based production facilities, reduce greenhouse gas emissions, and reduce our reliance on foreign oil.”

Bureau Administrator David Shaff adds, “We’ve analyzed the fuels and talked to experts on fuels and truck performance. We’ve tested B99. We expect this change to be almost cost neutral. We’re also analyzing performance. The bureau purchases about 100,000 gallons of diesel fuel annually. Diesel vehicles carry stickers saying Powered by Biodiesel.”
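To get a rough sense of scale, here is a back-of-the-envelope sketch of how much petroleum diesel the switch might displace. It rests on several assumptions of mine, not on the press release: that the 100,000 gallons is total blended fuel volume, that the whole fleet moves from B20 to B99, that B99 is exactly 99% biodiesel (the release says "more than 99%"), and that winter blend changes are ignored.

```python
ANNUAL_GALLONS = 100_000  # annual diesel purchases quoted by the Bureau


def petroleum_gallons(total_gallons: int, biodiesel_pct: int) -> float:
    """Gallons of petroleum diesel in a blend with the given biodiesel share."""
    return total_gallons * (100 - biodiesel_pct) / 100


# Assumption: the entire fleet moves from B20 to B99, year-round.
petro_b20 = petroleum_gallons(ANNUAL_GALLONS, 20)
petro_b99 = petroleum_gallons(ANNUAL_GALLONS, 99)
displaced = petro_b20 - petro_b99

print(f"Petroleum diesel on B20: {petro_b20:,.0f} gal/yr")
print(f"Petroleum diesel on B99: {petro_b99:,.0f} gal/yr")
print(f"Displaced by the switch: {displaced:,.0f} gal/yr")
```

Since some older vehicles stay on B20 and blends change in winter, the real figure would presumably be lower than this upper-bound estimate.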

In the winter months, the Bureau will modify the percentage of biodiesel to ensure that vehicles and equipment do not experience fuel gelling problems in colder weather, according to the City.

According to the press release, the Water Bureau vehicles converting to B99 are the workhorses of municipal public works: backhoes, dump trucks, graders, excavators, water service trucks, welding and crane trucks, pickup trucks, compressors, forklifts, tractors, mowers, generators, work vans, passenger vans and some passenger vehicles. Some older vehicles will remain on B20, and vehicles at the Bull Run maintenance yard will run on a B50 blend, the press release reports.

The City of Portland has been one of the more aggressive in the nation in the use of biodiesel. All city-owned diesel vehicles and equipment that use the City’s fueling stations have been powered by a B20 biodiesel blend since 2004, according to the City. Each year the City uses about 600,000 gallons of B20 in approximately 373 trucks, 166 pieces of construction equipment (backhoes, graders, excavators, etc.) and 62 towed units (compressors, generators, etc.), the City says.

According to the City, the conversion to a biodiesel blend began in 2001, when the City of Portland & Multnomah County’s Sustainable Procurement Strategy identified three target areas for improvement: biodiesel, hybrid vehicles, and vehicle purchasing performance standards. Soon after, Multnomah County conducted a one-year pilot study on the use of B20 in its fleet, showing promising results. By August 2004, both the City and the County had converted their diesel fleet vehicles to B20.

All City bureaus agreed to absorb the extra cost of switching to biodiesel - about $0.20 per gallon at the time - citing a number of benefits over traditional petrodiesel, including:

In light of the community health and environmental costs associated with these pollutants, the City decided that the price premium for the biodiesel was more than offset by the gains in improving local air quality.

Additionally, in July 2006, the Portland City Council voted to approve a citywide renewable fuels standard requiring that all diesel sold in Portland contain 5% biodiesel (B5) and all gasoline contain 10% ethanol (E10), effective July 2007. In an effort to maximize the City’s own use of renewable fuels, they also created a binding City Policy formally requiring that all City-owned:

The city is now also requiring all solid-waste hauling franchisees—businesses awarded franchises by the city for the collection of solid waste—to use B20 biodiesel blends in their refuse vehicles.

The Portland-metro area transit authority, Tri-Met, has also expanded the use of biodiesel, and has used a B20 blend in all 210 of its LIFT service buses, which provide door-to-door service for elderly people and people with disabilities, since May 2006 [see previous post].

I'm continually proud to live in the city of Portland. The commitment across the board from city officials and leaders to making Portland one of the most sustainable cities in the world has been a source of pride for our city and has had real economic benefits as well, encouraging many 'green-minded' residents and businesses to make Portland their home.

My question now, with the expanded use of biodiesel across the city (and state), is where is it all coming from? Initially, the bulk of biodiesel used in Oregon was provided by SeQuential Pacific Biofuels, which converts used cooking oils to biodiesel in a very efficient process performed in-state at its Salem biodiesel production facility. I'm curious how much of the biodiesel consumed in state continues to be produced in-state or in-region from local supplies of used cooking oil, and how much of it is soy-based biodiesel from outside the state. Obviously, the economic development benefits, process efficiency, and environmental benefits of biodiesel use are greater for Oregon when the product is produced in or around Oregon and is based on in-state or in-region feedstocks.

If the region wants to continue to reap the full benefits of the continued expansion of biodiesel consumption, it needs to develop strategies to increase in-region production of both feedstocks and fuels. The Northwest is unfortunately not well suited to soy cultivation. Canola could be grown in the region as a feedstock for biodiesel production, but it presents a problem: canola is closely related to a wide variety of high-value 'boutique' and specialty crops grown throughout much of the Northwest, and concerns about cross-pollination have resulted in state policies that currently preclude growing canola in much of Oregon's prime agricultural land. I know that the State Departments of Agriculture and Energy are working to develop a plan to safely allow some development of canola or other biodiesel feedstocks, if possible, but challenges remain.

But once again, bravo to Portland for this policy!

[A tip o' the hat to Green Car Congress]

Tuesday, October 17, 2006

California to Link with East Coast RGGI States for Uniform Greenhouse Gas Market

[From Green Car Congress:]

In order to more efficiently reduce greenhouse gas emissions, California Gov. Arnold Schwarzenegger and New York Gov. George E. Pataki have agreed to explore ways to link California’s future greenhouse gas emission credit market and the Northeastern and Mid-Atlantic states’ Regional Greenhouse Gas Initiative (RGGI) upcoming market.

RGGI is a cooperative effort by Northeast and Mid-Atlantic states to create a regional cap-and-trade program intended to achieve a 10% reduction in greenhouse gas emissions from power plants by 2019.

The RGGI program will implement the nation’s first mandatory cap-and-trade program for carbon dioxide emissions. In addition to New York, other states signing the agreement to implement RGGI include: Connecticut, Delaware, Maine, New Hampshire, New Jersey, and Vermont. The State of Maryland also has adopted legislation to join RGGI by June 2007.

Earlier this year, a model set of regulations to implement RGGI was finalized. New York and the other six states that are participating in RGGI are able to use this model to draft the necessary regulations or legislation to implement this program in their own states. New York plans to propose its draft regulation in the next few months.

RGGI uses a flexible, market-based cap-and-trade system. Each state will issue one emissions credit or allowance for each ton of CO2 emissions allowed under the regional cap. These emissions credits will be traded as commodities.

Last month, Governor Schwarzenegger signed AB 32, California’s landmark greenhouse gas emissions legislation that established a program of regulatory and market mechanisms to achieve real, quantifiable, cost-effective reductions of greenhouse gases. (see earlier post.)

AB 32 requires the California Air Resources Board (CARB) to develop regulations and market mechanisms that will ultimately reduce California’s greenhouse gas emissions by 25% by 2020. Mandatory caps will begin in 2012 for significant sources and ratchet down to meet the 2020 goals.

The two governors discussed ways to link the two efforts to create a uniform carbon trading system.

Gov. Schwarzenegger also announced an executive order directing California Secretary for Environmental Protection Linda Adams to coordinate state climate change policy. The executive order, which will formally be signed on Tuesday, also instructs the secretary to work with the California Air Resources Board to seek to develop a framework that allows California to work together with RGGI. Such an arrangement would help build a large, robust carbon trading market.

This seems like smart policy. As much as possible, integrating the tradable environmental attribute markets - be they for CO2, NOx, SO2, or Renewable Energy Credits (RECs) - creates more robust and efficient trading markets. And, in the absence of unifying federal legislation capping carbon emissions, it's good to see as much cooperation as possible on the state level.

I'm consistently impressed with the leadership of these two Republican Governors (from 'blue' states) on issues pertaining to climate change, renewable energy development, and domestic energy security. Again, I ask you, Mr. President, what's your excuse for inaction?

Wednesday, October 11, 2006

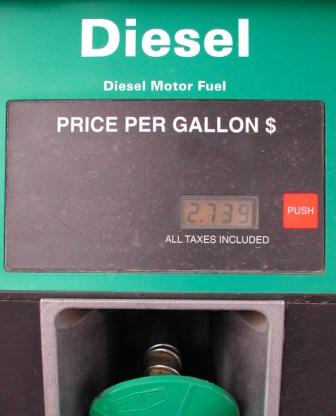

Transition to Low-Sulfur Diesel Fuel Nearing Completion

The New York Times reports today that the transition to low-sulfur diesel fuel is proceeding rapidly and trucks, buses and other diesel vehicles across the country can now fill up with the less polluting fuel. Perhaps the most significant revolution in highway fuels since the introduction of lead-free gasoline, the new ultra-low sulfur diesel fuel has just 3% of the sulfur previously found in diesel fuel.

Like lead, sulfur generates air pollution that leads to severe health consequences. Like lead, it also gums up the works of fine-tuned pollution control devices, making it exceedingly difficult to produce cleaner-burning engines.

The new low-sulfur diesel fuel standards call for fuel with an average sulfur content not exceeding 15 parts per million (ppm), a 97% reduction from the previous standard of 500 ppm.
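The 3% and 97% figures follow directly from the two limits. A quick sketch of the arithmetic in Python (the variable names are mine, for illustration only):

```python
OLD_LIMIT_PPM = 500  # previous sulfur standard cited above
NEW_LIMIT_PPM = 15   # new ultra-low sulfur standard cited above

# Share of the old sulfur limit that the new fuel is still allowed to contain.
remaining_pct = 100.0 * NEW_LIMIT_PPM / OLD_LIMIT_PPM
reduction_pct = 100.0 - remaining_pct

print(f"Sulfur remaining: {remaining_pct:.0f}% of the old limit")
print(f"Reduction: {reduction_pct:.0f}%")
```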

The transition to low-sulfur diesel began in June 2006 in order to prepare U.S. diesel vehicles for the new stricter Federal Tier 2 emissions standards scheduled to be implemented in 2007. The low sulfur content enables the use of catalyst-based exhaust after-treatment pollution control devices that will further reduce emissions of particulates, oxides of nitrogen, and other diesel exhaust pollutants as the new Tier 2 emissions standards are phased in next year. The new fuel will thus pave the way for new generations of diesel engines that will eventually cut lethal particulate pollution from diesel tailpipes an estimated 95 percent, according to the Times.

The transition to the new fuel is occurring swiftly, according to the Times. As of Sunday, at least 80 percent of the diesel available for trucks and buses has to meet the new standard, the Times reports, and officials of the Environmental Protection Agency said Tuesday that the changeover was occurring so swiftly that 90 percent of the fuel would soon be compatible.

Apparently, the Bush administration is trying to claim this accomplishment as its own, ignoring the fact that the regulation has its origins in the 1990s and became effective in December 2000, before President Bush took office.

According to the Times, in a news conference in Columbus, Ind., the headquarters of Cummins Engine, a major manufacturer of diesel engines, the environmental protection administrator, Stephen L. Johnson, said, “Under President Bush’s leadership, the pumps are primed to deliver clean diesel and a cleaner future for America.”

The Times article continues: Old diesel engines burning the cleaner fuel will reduce dangerous particulate emissions by 10 percent, experts say. New engines with improved controls, which have to be available by Jan. 1, will cut this particulate pollution by more than 95 percent. The rule mandates more improved engines in 2010. It is unclear how soon existing trucks and buses, which often are in use for more than 10 years, will be turned in for newer models.